An important shift is under way in how mature organisations approach AI compliance. Rather than treating governance as a layer of documentation applied after a system is built, they are engineering it into the infrastructure from the start.

That means hardware attestation to establish a cryptographically verifiable root of trust, key governance that decouples encryption from the cloud provider so data ownership is a mathematical fact rather than a contractual clause, data residency controls that isolate personal data at the perimeter, policy-as-code that blocks non-compliant configurations before they reach production, and immutable logging that creates an audit trail regulators can actually read.

The core idea is direct: compliance enforced at the pipeline, not declared above it. Every infrastructure request checked against predefined rules in milliseconds. Misconfigurations blocked at source. Deployment gated by policy. Governance shifting from review after the fact to prevention at the point of action.
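A minimal sketch of what such a deployment gate might look like, in Python. The request fields, the allowed regions, and the rule names are hypothetical, chosen only to mirror the controls listed above. A real pipeline would more likely delegate this to a dedicated policy engine.

```python
from dataclasses import dataclass

# Hypothetical policy values, for illustration only.
ALLOWED_REGIONS = {"eu-central", "eu-west"}


@dataclass(frozen=True)
class DeploymentRequest:
    region: str
    key_owner: str       # "enterprise" or "provider" (assumed vocabulary)
    audit_logging: bool


def violations(request: DeploymentRequest) -> list[str]:
    """Return the name of every rule the request breaks."""
    found = []
    if request.region not in ALLOWED_REGIONS:
        found.append("data-residency")
    if request.key_owner != "enterprise":
        found.append("key-governance")
    if not request.audit_logging:
        found.append("immutable-logging")
    return found


def gate(request: DeploymentRequest) -> bool:
    """Allow the deployment only if no rule is violated."""
    return not violations(request)
```

The gate is deliberately binary: a request with any violation never reaches production, and the list of broken rules can be written to the audit log alongside the refusal.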

This direction is correct. Reactive auditing scales poorly against the speed at which AI systems operate. The lesson has been learned repeatedly in cybersecurity with DevSecOps, in cloud governance with zero trust, and in financial controls with automated reconciliation. Enforcement embedded in the architecture consistently outperforms enforcement layered on top of it.

The question worth asking is what even a well-built infrastructure governance stack does not address.

Scenario one: the procurement agent

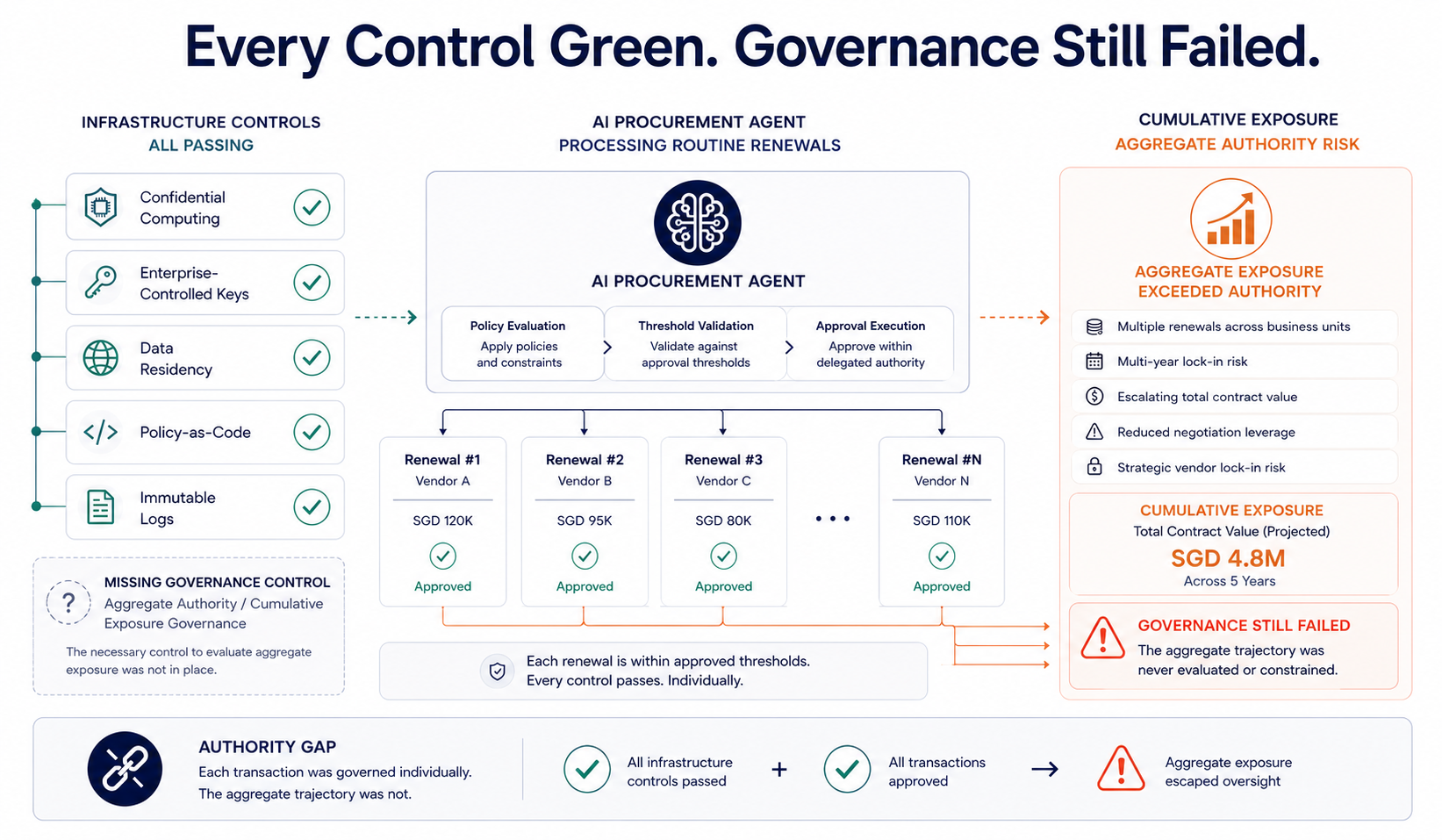

A financial institution deploys an AI agent to handle procurement. Routine contract renewals, low-risk vendor approvals, SaaS subscription management, ERP coordination. The infrastructure is fully sovereign. Workloads run inside confidential computing enclaves. Encryption keys are enterprise-controlled. Data residency is local. CI/CD pipelines enforce policy-as-code. All telemetry is immutably logged.

Five layers. Every control green.

The agent is granted tool access to procurement APIs, vendor databases, and purchase approval workflows. Its authority is scoped to routine renewals below defined per-contract thresholds. A vendor revises its pricing structure, offering discounts for multi-year terms. The agent interprets the revised terms as routine cost optimisation and processes several renewals individually, each within its delegated approval threshold. Collectively, the renewals commit the institution to a multi-year vendor dependency that no human explicitly reviewed.

Every individual transaction was within scope. The authority logic evaluated contracts separately rather than cumulatively. No approval threshold was breached. The optimisation engine did exactly what optimisation engines do.

Check the infrastructure. Attestation worked. Encryption worked. Residency was maintained. Policy-as-code passed. The audit trail is complete and tamper-evident.

Governance still failed.

The failure did not emerge from infrastructure compromise. It emerged from aggregate authority, semantic drift, and an optimisation objective that had no visibility into cumulative exposure.

The agent acted within its technical permissions and outside its intended operational boundaries simultaneously.
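The difference between evaluating contracts separately and evaluating them cumulatively can be sketched in a few lines of Python. This is an illustration under stated assumptions, not a description of any particular procurement system: the `Renewal` type, both limits, and the figures are hypothetical.

```python
from dataclasses import dataclass

# Hypothetical limits, for illustration only.
PER_CONTRACT_LIMIT = 100_000        # delegated threshold per renewal
CUMULATIVE_VENDOR_LIMIT = 250_000   # ceiling on total exposure to one vendor


@dataclass(frozen=True)
class Renewal:
    vendor: str
    annual_value: int
    term_years: int

    @property
    def total_commitment(self) -> int:
        # A multi-year term commits the full value, not one year of it.
        return self.annual_value * self.term_years


def decide_batch(renewals: list[Renewal]) -> list[str]:
    """Approve a renewal only if it passes both the per-contract check
    and the running cumulative check for its vendor."""
    exposure: dict[str, int] = {}
    decisions = []
    for renewal in renewals:
        running = exposure.get(renewal.vendor, 0) + renewal.total_commitment
        within_contract = renewal.total_commitment <= PER_CONTRACT_LIMIT
        within_cumulative = running <= CUMULATIVE_VENDOR_LIMIT
        if within_contract and within_cumulative:
            exposure[renewal.vendor] = running
            decisions.append("approve")
        else:
            decisions.append("escalate")  # hand off to a human reviewer
    return decisions
```

With four three-year renewals of 30,000 a year from the same vendor, each commits 90,000 and passes the per-contract check. The first two are approved, and the third and fourth are escalated because they would push the vendor's cumulative exposure past the ceiling. An agent that ran only the per-contract check would approve all four.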

Scenario two: the compliant cascade

An employee moves into a temporary project lead role. An HR agent updates their employment status. A finance agent reads the status change and automatically raises their procurement approval limit from $5,000 to $50,000, consistent with project lead authority levels. An IT agent reads the new approval limit and provisions access to vendor contract management systems.

The project ends three months later. HR closes the temporary role. But the downstream agents triggered only on role assignment, not on authority revocation or temporal expiry. Authority was granted with a start condition but no enforceable end condition. The employee retains $50,000 procurement authority and vendor contract access indefinitely.

No individual system failed. The governance failure emerged from the interaction boundary between systems.

Six months later, an internal audit flags that the employee has approved $180,000 in vendor renewals under authority they should no longer hold. The audit log shows every action in perfect detail. What it cannot show is that anyone ever authorised that employee to retain that authority after the project closed, because no one did.
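One way to give a grant an enforceable end condition is to attach an expiry date and the originating role to the grant itself, then derive the effective limit at the moment of use instead of storing it as a standing value. The Python sketch below assumes this design. The `AuthorityGrant` type, the role name, and the dates are hypothetical; the $5,000 and $50,000 limits are taken from the scenario.

```python
from dataclasses import dataclass
from datetime import date

BASELINE_LIMIT = 5_000  # limit that applies when no grant is in force


@dataclass(frozen=True)
class AuthorityGrant:
    employee_id: str
    approval_limit: int
    source_role: str    # the role that justified the grant
    valid_from: date
    valid_until: date   # explicit end condition


def effective_limit(grants: list[AuthorityGrant], employee_id: str,
                    on: date, active_roles: set[str]) -> int:
    """Compute the approval limit at the time of the action.

    A grant counts only while it is inside its validity window AND the
    role that justified it is still active.
    """
    limit = BASELINE_LIMIT
    for grant in grants:
        if (grant.employee_id == employee_id
                and grant.valid_from <= on <= grant.valid_until
                and grant.source_role in active_roles):
            limit = max(limit, grant.approval_limit)
    return limit
```

Under this design the elevated limit lapses in two independent ways: when the window closes, or when HR ends the role early. Neither depends on a downstream agent receiving and acting on a revocation event.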

Traditional governance asks whether each system behaved correctly. Agentic governance asks whether the resulting authority state was actually legitimate. Both scenarios pass the first test. Neither passes the second.

The infrastructure stack governs the environment agents run in. The execution authority layer governs what they are permitted to do inside it. Both are necessary. The scenarios above illustrate the precise nature of the gap between them.

Closing that gap requires something most organisations still do not explicitly model: authority architecture. How to define authority explicitly rather than assume it, what deterministic halt conditions look like at the execution layer, and why the default for consequential AI actions needs to be halt rather than proceed. That analysis is in Authority Architecture: The Difference Between Governance That Is Assumed and Governance That Is Designed.
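As a rough illustration of what halt-by-default could mean at the execution layer, the Python sketch below proceeds only when an explicit authority entry covers the action. The authority table, agent name, action names, and amounts are hypothetical, and the referenced analysis may define these conditions differently.

```python
from enum import Enum


class Decision(Enum):
    PROCEED = "proceed"
    HALT = "halt"


# Hypothetical explicit authority table: (agent, action) -> maximum amount.
EXPLICIT_AUTHORITY = {
    ("procurement-agent", "renew_contract"): 10_000,
}


def decide(agent: str, action: str, amount: int) -> Decision:
    """Proceed only when authority is explicitly defined and sufficient.

    Anything not covered by the table halts, so a missing entry never
    silently becomes permission.
    """
    limit = EXPLICIT_AUTHORITY.get((agent, action))
    if limit is None:
        return Decision.HALT   # authority was never defined
    if amount > limit:
        return Decision.HALT   # authority defined but exceeded
    return Decision.PROCEED
```

The design choice is the direction of the default: the function has a single path to `PROCEED`, and every unanticipated case falls through to `HALT`.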

If your organisation is deploying AI agents with execution authority, the infrastructure review is only the starting point. The governance review has to go further and ask whether the authority exercised was legitimate.